

Working with Data in ASP.NET Core Apps

Tip

This content is an excerpt from the eBook, Architect Modern Web Applications with ASP.NET Core and Azure, available on .NET Docs or as a free downloadable PDF that can be read offline.

"Data is a precious thing and will last longer than the systems themselves."

Tim Berners-Lee

Data access is an important part of almost any software application. ASP.NET Core supports various data access options, including Entity Framework Core (and Entity Framework 6 as well), and can work with any .NET data access framework. The choice of which data access framework to use depends on the application's needs. Abstracting these choices from the ApplicationCore and UI projects, and encapsulating implementation details in Infrastructure, helps to produce loosely coupled, testable software.
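As a rough illustration of that separation, the sketch below puts an interface where ApplicationCore would define it and an implementation where Infrastructure would provide it. The names ICatalogBrandRepository, InMemoryCatalogBrandRepository, and BrandService are hypothetical and are not taken from eShopOnWeb; the in-memory list stands in for whatever data access framework a real implementation would wrap.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical abstraction that would live in ApplicationCore.
public interface ICatalogBrandRepository
{
    IReadOnlyList<string> ListBrandNames();
    void Add(string brandName);
}

// Hypothetical implementation that would live in Infrastructure.
// A real one would wrap EF Core, Dapper, or another data access framework.
public sealed class InMemoryCatalogBrandRepository : ICatalogBrandRepository
{
    private readonly List<string> _brands = new List<string>();

    public IReadOnlyList<string> ListBrandNames() =>
        _brands.OrderBy(name => name, StringComparer.Ordinal).ToList();

    public void Add(string brandName) => _brands.Add(brandName);
}

// Application-level service: it depends only on the interface.
public sealed class BrandService
{
    private readonly ICatalogBrandRepository _repository;

    public BrandService(ICatalogBrandRepository repository) => _repository = repository;

    public string DescribeBrands() => string.Join(", ", _repository.ListBrandNames());
}

public static class Program
{
    public static void Main()
    {
        var repository = new InMemoryCatalogBrandRepository();
        repository.Add("Contoso");
        repository.Add("Adventure Works");

        var service = new BrandService(repository);
        Console.WriteLine(service.DescribeBrands()); // Adventure Works, Contoso
    }
}
```

Because BrandService never names a concrete data access type, a test can hand it the in-memory implementation while production code registers a database-backed one.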

Entity Framework Core (for relational databases)

If you're writing a new ASP.NET Core application that needs to work with relational data, then Entity Framework Core (EF Core) is the recommended way for your application to access its data. EF Core is an object-relational mapper (O/RM) that enables .NET developers to persist objects to and from a data source. It eliminates the need for most of the data access code developers would typically need to write. Like ASP.NET Core, EF Core has been rewritten from the ground up to support modular cross-platform applications. You add it to your application as a NuGet package, configure it during app startup, and request it through dependency injection wherever you need it.

To use EF Core with a SQL Server database, run the following dotnet CLI command:

    dotnet add package Microsoft.EntityFrameworkCore.SqlServer

To add support for an InMemory data source, for testing:

    dotnet add package Microsoft.EntityFrameworkCore.InMemory

The DbContext

To work with EF Core, you need a subclass of DbContext. This class holds properties representing collections of the entities your application will work with. The eShopOnWeb sample includes a CatalogContext with collections for items, brands, and types:

    public class CatalogContext : DbContext
    {
        public CatalogContext(DbContextOptions<CatalogContext> options) : base(options)
        {
        }

        public DbSet<CatalogItem> CatalogItems { get; set; }
        public DbSet<CatalogBrand> CatalogBrands { get; set; }
        public DbSet<CatalogType> CatalogTypes { get; set; }
    }

Your DbContext must have a constructor that accepts DbContextOptions and passes this argument to the base DbContext constructor. If you have only one DbContext in your application, you can pass an instance of DbContextOptions, but if you have more than one you must use the generic DbContextOptions<T> type, passing in your DbContext type as the generic parameter.

Configuring EF Core

In your ASP.NET Core application, you'll typically configure EF Core in your ConfigureServices method. EF Core uses a DbContextOptionsBuilder, which supports several helpful extension methods to streamline its configuration. To configure CatalogContext to use a SQL Server database with a connection string defined in Configuration, you would add the following code to ConfigureServices:

    services.AddDbContext<CatalogContext>(options =>
        options.UseSqlServer(Configuration.GetConnectionString("DefaultConnection")));

To use the in-memory database:

    // Current EF Core versions require a database name; "Catalog" is an example name.
    services.AddDbContext<CatalogContext>(options =>
        options.UseInMemoryDatabase("Catalog"));

Once you have installed EF Core, created a DbContext child type, and configured it in ConfigureServices, you are ready to use EF Core. You can request an instance of your DbContext type in any service that needs it, and start working with your persisted entities using LINQ as if they were simply in a collection. EF Core does the work of translating your LINQ expressions into SQL queries to store and retrieve your data.

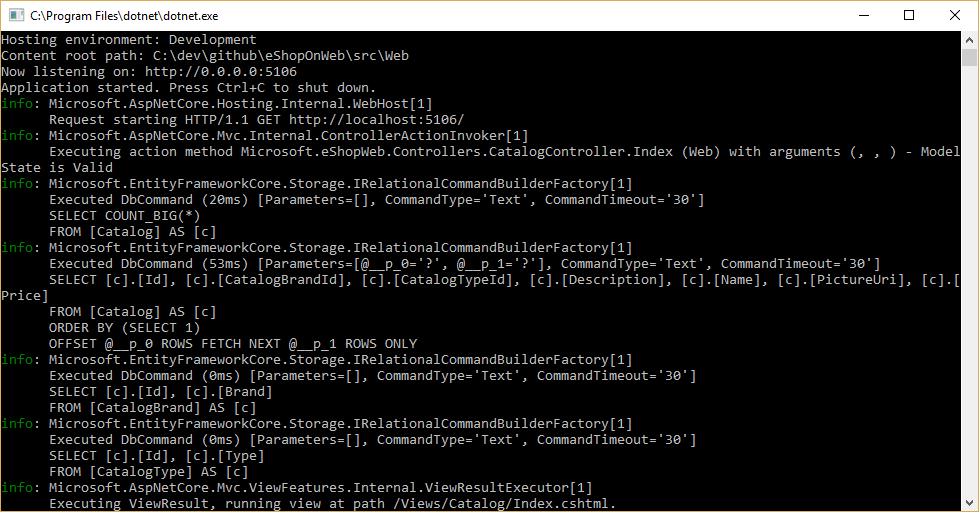

You can see the queries EF Core is executing by configuring a logger and ensuring its level is set to at least Information, as shown in Figure 8-1.

Figure 8-1. Logging EF Core queries to the console

Fetching and storing Data

To retrieve data from EF Core, you access the appropriate property and use LINQ to filter the result. You can also use LINQ to perform projection, transforming the result from one type to another. The following example would retrieve CatalogBrands, ordered by name, filtered by their Enabled property, and projected onto a SelectListItem type:

    var brandItems = await _context.CatalogBrands
        .Where(b => b.Enabled)
        .OrderBy(b => b.Name)
        .Select(b => new SelectListItem {
            Value = b.Id.ToString(), // SelectListItem.Value is a string
            Text = b.Name })
        .ToListAsync();

It's important in the above example to add the call to ToListAsync in order to execute the query immediately. Otherwise, the statement will assign an IQueryable<SelectListItem> to brandItems, which will not be executed until it is enumerated. There are pros and cons to returning IQueryable results from methods. It allows the query EF Core will construct to be further modified, but can also result in errors that only occur at run time, if operations are added to the query that EF Core cannot translate. It's generally safer to pass any filters into the method performing the data access, and return an in-memory collection (for example, List<T>) as the result.
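The deferred execution described above can be observed without a database. The following sketch uses LINQ to Objects through AsQueryable rather than EF Core, so it only illustrates the timing of execution, not SQL translation. The Brand record, DeferredExecutionDemo class, and FilterCalls counter are made up for this illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public record Brand(string Name, bool Enabled);

public static class DeferredExecutionDemo
{
    // Counts how many times the filter actually runs.
    public static int FilterCalls;

    private static bool IsEnabled(Brand brand)
    {
        FilterCalls++;
        return brand.Enabled;
    }

    // Builds a query without executing it.
    public static IQueryable<string> BuildQuery(IEnumerable<Brand> brands) =>
        brands.AsQueryable()
              .Where(b => IsEnabled(b))
              .OrderBy(b => b.Name)
              .Select(b => b.Name);
}

public static class Program
{
    public static void Main()
    {
        var brands = new List<Brand>
        {
            new Brand("Contoso", true),
            new Brand("Fabrikam", false),
            new Brand("Adventure Works", true),
        };

        IQueryable<string> query = DeferredExecutionDemo.BuildQuery(brands);
        Console.WriteLine(DeferredExecutionDemo.FilterCalls); // 0: nothing has run yet

        List<string> names = query.ToList(); // enumeration executes the query
        Console.WriteLine(DeferredExecutionDemo.FilterCalls); // 3: one call per brand
        Console.WriteLine(string.Join(", ", names)); // Adventure Works, Contoso
    }
}
```

With EF Core the same rule applies, except that enumeration sends SQL to the database, which is why a method that returns IQueryable leaves the timing and final shape of the query to its caller.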

EF Core tracks changes on entities it fetches from persistence. To save changes to a tracked entity, you just call the SaveChanges method on the DbContext, making sure it's the same DbContext instance that was used to fetch the entity. Adding and removing entities is done directly on the appropriate DbSet property, again with a call to SaveChanges to execute the database commands. The following example demonstrates adding, updating, and removing entities from persistence.

    // create
    var newBrand = new CatalogBrand() { Brand = "Acme" };
    _context.Add(newBrand);
    await _context.SaveChangesAsync();

    // read and update
    var existingBrand = _context.CatalogBrands.Find(1);
    existingBrand.Brand = "Updated Brand";
    await _context.SaveChangesAsync();

    // read and delete (alternate Find syntax)
    var brandToDelete = _context.Find<CatalogBrand>(2);
    _context.CatalogBrands.Remove(brandToDelete);
    await _context.SaveChangesAsync();

EF Core supports both synchronous and async methods for fetching and saving. In web applications, it's recommended to use the async/await pattern with the async methods, so that web server threads are not blocked while waiting for data access operations to complete.

Fetching related data

When EF Core retrieves entities, it populates all of the properties that are stored directly with that entity in the database. Navigation properties, such as lists of related entities, are not populated and may have their value set to null. This process ensures EF Core is not fetching more data than is needed, which is especially important for web applications, which must quickly process requests and return responses in an efficient manner. To include relationships with an entity using eager loading, you specify the property using the Include extension method on the query, as shown:

    // .Include requires using Microsoft.EntityFrameworkCore
    var brandsWithItems = await _context.CatalogBrands
        .Include(b => b.Items)
        .ToListAsync();

You can include multiple relationships, and you can also include subrelationships using ThenInclude. EF Core will execute a single query to retrieve the resulting set of entities. Alternately you can include navigation properties of navigation properties by passing a '.'-separated string to the .Include() extension method, like so:

    .Include("Items.Products")

In addition to encapsulating filtering logic, a specification can specify the shape of the data to be returned, including which properties to populate. The eShopOnWeb sample includes several specifications that demonstrate encapsulating eager loading information within the specification. You can see how the specification is used as part of a query here:

    // Includes all expression-based includes
    query = specification.Includes.Aggregate(query,
                (current, include) => current.Include(include));

    // Include any string-based include statements
    query = specification.IncludeStrings.Aggregate(query,
                (current, include) => current.Include(include));

Another option for loading related data is to use explicit loading. Explicit loading allows you to load additional data into an entity that has already been retrieved. Since this approach involves a separate request to the database, it's not recommended for web applications, which should minimize the number of database round trips made per request.
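To make the specification idea mentioned above more concrete, here is a minimal sketch of the filtering half of the pattern. It is not the eShopOnWeb implementation: the ISpecification interface shown has only a Criteria member, the CatalogItem record and ItemsByBrandSpecification class are hypothetical, and the Includes and IncludeStrings members are left out because they depend on EF Core.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

public record CatalogItem(string Name, int BrandId, decimal Price);

// Hypothetical minimal specification: only a filter criterion.
public interface ISpecification<T>
{
    Expression<Func<T, bool>> Criteria { get; }
}

public sealed class ItemsByBrandSpecification : ISpecification<CatalogItem>
{
    public ItemsByBrandSpecification(int brandId) =>
        Criteria = item => item.BrandId == brandId;

    public Expression<Func<CatalogItem, bool>> Criteria { get; }
}

public static class SpecificationEvaluator
{
    // Applies the specification's criteria to any IQueryable source.
    public static IQueryable<T> Apply<T>(IQueryable<T> source, ISpecification<T> specification) =>
        source.Where(specification.Criteria);
}

public static class Program
{
    public static void Main()
    {
        var items = new List<CatalogItem>
        {
            new CatalogItem("Mug", 1, 8.50m),
            new CatalogItem("Shirt", 2, 19.00m),
            new CatalogItem("Cap", 1, 12.00m),
        };

        var names = SpecificationEvaluator
            .Apply(items.AsQueryable(), new ItemsByBrandSpecification(1))
            .Select(item => item.Name)
            .ToList();

        Console.WriteLine(string.Join(", ", names)); // Mug, Cap
    }
}
```

Keeping the criteria as an expression rather than a compiled delegate is what would let a query provider such as EF Core translate it to SQL instead of filtering in memory.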

Lazy loading is a feature that automatically loads related data as it is referenced by the application. EF Core added support for lazy loading in version 2.1. Lazy loading is not enabled by default and requires installing the Microsoft.EntityFrameworkCore.Proxies package. As with explicit loading, lazy loading should typically be disabled for web applications, since its use will result in additional database queries being made within each web request. Unfortunately, the overhead incurred by lazy loading often goes unnoticed at development time, when the latency is small and often the data sets used for testing are small. However, in production, with more users, more data, and more latency, the additional database requests can often result in poor performance for web applications that make heavy use of lazy loading.

Avoid Lazy Loading Entities in Web Applications

Encapsulating data

EF Core supports several features that allow your model to properly encapsulate its state. A common problem in domain models is that they expose collection navigation properties as publicly accessible list types. This problem allows any collaborator to manipulate the contents of these collection types, which may bypass important business rules related to the collection, possibly leaving the object in an invalid state. The solution to this problem is to expose read-only access to related collections, and explicitly provide methods defining ways in which clients can manipulate them, as in this example:

    public class Basket : BaseEntity
    {
        public string BuyerId { get; set; }
        private readonly List<BasketItem> _items = new List<BasketItem>();
        public IReadOnlyCollection<BasketItem> Items => _items.AsReadOnly();

        public void AddItem(int catalogItemId, decimal unitPrice, int quantity = 1)
        {
            if (!Items.Any(i => i.CatalogItemId == catalogItemId))
            {
                _items.Add(new BasketItem()
                {
                    CatalogItemId = catalogItemId,
                    Quantity = quantity,
                    UnitPrice = unitPrice
                });
                return;
            }
            var existingItem = Items.FirstOrDefault(i => i.CatalogItemId == catalogItemId);
            existingItem.Quantity += quantity;
        }
    }

This entity type doesn't expose a public List or ICollection property, but instead exposes an IReadOnlyCollection type that wraps the underlying List type. When using this pattern, you can indicate to Entity Framework Core to use the backing field like so:

    private void ConfigureBasket(EntityTypeBuilder<Basket> builder)
    {
        var navigation = builder.Metadata.FindNavigation(nameof(Basket.Items));

        navigation.SetPropertyAccessMode(PropertyAccessMode.Field);
    }

Another way in which you can improve your domain model is by using value objects for types that lack identity and are only distinguished by their properties. Using such types as properties of your entities can help keep logic specific to the value object where it belongs, and can avoid duplicate logic between multiple entities that use the same concept. In Entity Framework Core, you can persist value objects in the same table as their owning entity by configuring the type as an owned entity, like so:

    private void ConfigureOrder(EntityTypeBuilder<Order> builder)
    {
        builder.OwnsOne(o => o.ShipToAddress);
    }

In this example, the ShipToAddress property is of type Address. Address is a value object with several properties such as Street and City. EF Core maps the Order object to its table with one column per Address property, prefixing each column name with the name of the property. In this example, the Order table would include columns such as ShipToAddress_Street and ShipToAddress_City. It's also possible to store owned types in separate tables, if desired.

Learn more about owned entity support in EF Core.
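The value object idea described above can be sketched in plain C# with no persistence involved. The Address and Order types below are simplified stand-ins, not the eShopOnWeb classes, and the sketch assumes a C# record is an acceptable way to get property-based equality. Whether a given record shape can be mapped as an owned type depends on the EF Core version and is not shown here.

```csharp
using System;

// Hypothetical value object: no identity, compared by its property values.
public sealed record Address(string Street, string City, string Country);

public sealed class Order
{
    public Order(int id, Address shipToAddress)
    {
        Id = id;
        ShipToAddress = shipToAddress;
    }

    public int Id { get; }
    public Address ShipToAddress { get; private set; }

    // The whole value is replaced; its parts are never mutated individually.
    public void ChangeShippingAddress(Address newAddress) =>
        ShipToAddress = newAddress ?? throw new ArgumentNullException(nameof(newAddress));
}

public static class Program
{
    public static void Main()
    {
        var first = new Address("1 Main St", "Redmond", "USA");
        var second = new Address("1 Main St", "Redmond", "USA");

        // Two separate instances with the same values are equal.
        Console.WriteLine(first == second); // True

        var order = new Order(1, first);
        order.ChangeShippingAddress(new Address("2 Side St", "Seattle", "USA"));
        Console.WriteLine(order.ShipToAddress.City); // Seattle
    }
}
```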

Resilient connections

External resources like SQL databases may occasionally be unavailable. In cases of temporary unavailability, applications can use retry logic to avoid raising an exception. This technique is commonly referred to as connection resiliency. You can implement your own retry with exponential backoff technique by attempting to retry with an exponentially increasing wait time, until a maximum retry count has been reached. This technique embraces the fact that cloud resources might intermittently be unavailable for short periods of time, resulting in the failure of some requests.
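A hand-rolled version of retry with exponential backoff might look like the sketch below. The Retry class, its parameter names, and the choice of TimeoutException as the transient failure are assumptions made for illustration; a real implementation would decide which exceptions count as transient for its data store and would usually add some random jitter to the delays.

```csharp
using System;
using System.Threading.Tasks;

public static class Retry
{
    // Delay before retry number `attempt` (1-based): baseDelay * 2^(attempt - 1).
    public static TimeSpan DelayFor(int attempt, TimeSpan baseDelay) =>
        TimeSpan.FromMilliseconds(baseDelay.TotalMilliseconds * Math.Pow(2, attempt - 1));

    // Runs the operation, retrying up to maxRetryCount times after a failure.
    public static async Task<T> ExecuteAsync<T>(
        Func<Task<T>> operation, int maxRetryCount, TimeSpan baseDelay)
    {
        for (int attempt = 1; ; attempt++)
        {
            try
            {
                return await operation();
            }
            catch (TimeoutException) when (attempt <= maxRetryCount)
            {
                // Assumption: TimeoutException represents a transient failure.
                await Task.Delay(DelayFor(attempt, baseDelay));
            }
        }
    }
}

public static class Program
{
    public static async Task Main()
    {
        int calls = 0;

        // Simulated operation that fails twice before succeeding.
        int result = await Retry.ExecuteAsync(() =>
        {
            calls++;
            if (calls < 3) throw new TimeoutException();
            return Task.FromResult(42);
        }, maxRetryCount: 5, baseDelay: TimeSpan.FromMilliseconds(10));

        Console.WriteLine($"{result} after {calls} calls"); // 42 after 3 calls
    }
}
```

Once the retry count is exhausted, the exception filter stops matching and the last exception propagates to the caller.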

For Azure SQL DB, Entity Framework Core already provides internal database connection resiliency and retry logic. But you need to enable the Entity Framework execution strategy for each DbContext connection if you want to have resilient EF Core connections.

For example, the following code at the EF Core connection level enables resilient SQL connections that are retried if the connection fails.

    builder.Services.AddDbContext<OrderingContext>(options =>
    {
        options.UseSqlServer(builder.Configuration["ConnectionString"],
            sqlServerOptionsAction: sqlOptions =>
            {
                sqlOptions.EnableRetryOnFailure(
                    maxRetryCount: 5,
                    maxRetryDelay: TimeSpan.FromSeconds(30),
                    errorNumbersToAdd: null);
            }
        );
    });

Execution strategies and explicit transactions using BeginTransaction and multiple DbContexts

When retries are enabled in EF Core connections, each operation you perform using EF Core becomes its own retryable operation. Each query and each call to SaveChanges will be retried as a unit if a transient failure occurs.

However, if your code initiates a transaction using BeginTransaction, you are defining your own group of operations that need to be treated as a unit; everything inside the transaction has to be rolled back if a failure occurs. You will see an exception like the following if you attempt to execute that transaction when using an EF execution strategy (retry policy) and you include several SaveChanges from multiple DbContexts in it.

System.InvalidOperationException: The configured execution strategy 'SqlServerRetryingExecutionStrategy' does not support user initiated transactions. Use the execution strategy returned by 'DbContext.Database.CreateExecutionStrategy()' to execute all the operations in the transaction as a retryable unit.

The solution is to manually invoke the EF execution strategy with a delegate representing everything that needs to be executed. If a transient failure occurs, the execution strategy will invoke the delegate again. The following code shows how to implement this approach:

    // Use of an EF Core resiliency strategy when using multiple DbContexts
    // within an explicit transaction
    // See:
    // https://docs.microsoft.com/ef/core/miscellaneous/connection-resiliency
    var strategy = _catalogContext.Database.CreateExecutionStrategy();
    await strategy.ExecuteAsync(async () =>
    {
        // Achieving atomicity between original Catalog database operation and the
        // IntegrationEventLog thanks to a local transaction
        using (var transaction = _catalogContext.Database.BeginTransaction())
        {
            _catalogContext.CatalogItems.Update(catalogItem);
            await _catalogContext.SaveChangesAsync();

            // Save to EventLog only if product price changed
            if (raiseProductPriceChangedEvent)
            {
                await _integrationEventLogService.SaveEventAsync(priceChangedEvent);
            }

            // Commit in both cases; otherwise the catalog update is rolled back
            // whenever no price-changed event is raised
            transaction.Commit();
        }
    });

The first DbContext is the _catalogContext and the second DbContext is within the _integrationEventLogService object. Finally, the Commit action would be performed across multiple DbContexts and using an EF Execution Strategy.

References – Entity Framework Core

- EF Core Docs https://docs.microsoft.com/ef/

- EF Core: Related Data https://docs.microsoft.com/ef/core/querying/related-data

- Avoid Lazy Loading Entities in ASP.NET Applications https://ardalis.com/avoid-lazy-loading-entities-in-asp-net-applications

EF Core or micro-ORM?

While EF Core is a great choice for managing persistence, and for the most part encapsulates database details from application developers, it isn't the only choice. Another popular open-source alternative is Dapper, a so-called micro-ORM. A micro-ORM is a lightweight, less full-featured tool for mapping objects to data structures. In the case of Dapper, its design goals focus on performance, rather than fully encapsulating the underlying queries it uses to retrieve and update data. Because it doesn't abstract SQL from the developer, Dapper is "closer to the metal" and lets developers write the exact queries they want to use for a given data access operation.

EF Core has two significant features it provides which separate it from Dapper but also add to its performance overhead. The first is the translation from LINQ expressions into SQL. These translations are cached, but even so there is overhead in performing them the first time. The second is change tracking on entities (so that efficient update statements can be generated). This behavior can be turned off for specific queries by using the AsNoTracking extension. EF Core also generates SQL queries that usually are very efficient and in any case perfectly acceptable from a performance standpoint, but if you need fine control over the precise query to be executed, you can pass in custom SQL (or execute a stored procedure) using EF Core, too. In this case, Dapper still outperforms EF Core, but only very slightly. Julie Lerman presents some performance data in her May 2016 MSDN article Dapper, Entity Framework, and Hybrid Apps. Additional performance benchmark data for a variety of data access methods can be found on the Dapper site.

To see how the syntax for Dapper varies from EF Core, consider these two versions of the same method for retrieving a list of items:

    // EF Core
    private readonly CatalogContext _context;
    public async Task<IEnumerable<CatalogType>> GetCatalogTypes()
    {
        return await _context.CatalogTypes.ToListAsync();
    }

    // Dapper
    private readonly SqlConnection _conn;
    public async Task<IEnumerable<CatalogType>> GetCatalogTypesWithDapper()
    {
        return await _conn.QueryAsync<CatalogType>("SELECT * FROM CatalogType");
    }

If you need to build more complex object graphs with Dapper, you need to write the associated queries yourself (as opposed to adding an Include as you would in EF Core). This functionality is supported through various syntaxes, including a feature called Multi Mapping that lets you map individual rows to multiple mapped objects. For example, given a class Post with a property Owner of type User, the following SQL would return all of the necessary data:

    select * from #Posts p
    left join #Users u on u.Id = p.OwnerId
    Order by p.Id

Each returned row includes both User and Post data. Since the User data should be attached to the Post data via its Owner property, the following function is used:

    (post, user) => { post.Owner = user; return post; }

The full code listing to return a collection of posts with their Owner property populated with the associated user data would be:

    var sql = @"select * from #Posts p
    left join #Users u on u.Id = p.OwnerId
    Order by p.Id";
    var data = connection.Query<Post, User, Post>(sql,
        (post, user) => { post.Owner = user; return post; });

Because it offers less encapsulation, Dapper requires developers to know more about how their data is stored and how to query it efficiently, and to write more code to fetch it. When the model changes, instead of simply creating a new migration (another EF Core feature), and/or updating mapping information in one place in a DbContext, every query that is impacted must be updated. These queries have no compile-time guarantees, so they may break at run time in response to changes to the model or database, making errors more difficult to detect quickly. In exchange for these tradeoffs, Dapper offers extremely fast performance.

For most applications, and most parts of almost all applications, EF Core offers acceptable performance. Thus, its developer productivity benefits are likely to outweigh its performance overhead. For queries that can benefit from caching, the actual query may only be executed a tiny percentage of the time, making relatively small query performance differences moot.

SQL or NoSQL

Traditionally, relational databases like SQL Server have dominated the marketplace for persistent data storage, but they are not the only solution available. NoSQL databases like MongoDB offer a different approach to storing objects. Rather than mapping objects to tables and rows, another option is to serialize the entire object graph, and store the result. The benefits of this approach, at least initially, are simplicity and performance. It's simpler to store a single serialized object with a key than to decompose the object into many tables with relationships and update rows that may have changed since the object was last retrieved from the database. Likewise, fetching and deserializing a single object from a key-based store is typically much faster and easier than complex joins or multiple database queries required to fully compose the same object from a relational database. The lack of locks or transactions or a fixed schema also makes NoSQL databases amenable to scaling across many machines, supporting very large datasets.
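The "serialize the whole object graph under a key" approach can be sketched with System.Text.Json and a dictionary standing in for the store. The DocumentStore class and the Order and OrderLine records are hypothetical, and a real NoSQL database would add persistence, indexing, and distribution that this sketch ignores.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Text.Json;

public record OrderLine(string Product, int Quantity);
public record Order(string Id, string Customer, List<OrderLine> Lines);

// Hypothetical stand-in for a key-based document store.
public sealed class DocumentStore
{
    private readonly Dictionary<string, string> _documents = new();

    // The whole graph (order plus its lines) is stored as one JSON document.
    public void Save(Order order) =>
        _documents[order.Id] = JsonSerializer.Serialize(order);

    // One lookup by key returns the complete graph; no joins are involved.
    public Order? Load(string id) =>
        _documents.TryGetValue(id, out var json)
            ? JsonSerializer.Deserialize<Order>(json)
            : null;
}

public static class Program
{
    public static void Main()
    {
        var store = new DocumentStore();
        store.Save(new Order("order-1", "Ada", new List<OrderLine>
        {
            new OrderLine("Mug", 2),
            new OrderLine("Cap", 1),
        }));

        Order? loaded = store.Load("order-1");
        Console.WriteLine($"{loaded?.Customer}: {loaded?.Lines.Count} lines"); // Ada: 2 lines
    }
}
```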

On the other hand, NoSQL databases (as they are typically called) have their drawbacks. Relational databases use normalization to enforce consistency and avoid duplication of data. This approach reduces the total size of the database and ensures that updates to shared data are available immediately throughout the database. In a relational database, an Address table might reference a Country table by ID, such that if the name of a country/region were changed, the address records would benefit from the update without themselves having to be updated. However, in a NoSQL database, Address, and its associated Country might be serialized as part of many stored objects. An update to a country/region name would require all such objects to be updated, rather than a single row. Relational databases can also ensure relational integrity by enforcing rules like foreign keys. NoSQL databases typically do not offer such constraints on their data.

Another complexity NoSQL databases must deal with is versioning. When an object's properties change, it may not be able to be deserialized from past versions that were stored. Thus, all existing objects that have a serialized (previous) version of the object must be updated to conform to its new schema. This approach is not conceptually different from a relational database, where schema changes sometimes require update scripts or mapping updates. However, the number of entries that must be modified is often much greater in the NoSQL approach, because there is more duplication of data.

It's possible in NoSQL databases to store multiple versions of objects, something fixed schema relational databases typically do not support. However, in this case, your application code will need to account for the existence of previous versions of objects, adding additional complexity.
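As a small illustration of application code accounting for older document versions, the sketch below reads two shapes of a customer document. The "version" property, both JSON shapes, and the CustomerReader and CustomerV2 names are invented for this example; real stores and serializers offer their own versioning conventions.

```csharp
#nullable enable
using System;
using System.Text.Json;

public record CustomerV2(string FirstName, string LastName);

public static class CustomerReader
{
    // Reads either the hypothetical version 1 shape
    //   {"version":1,"name":"Ada Lovelace"}
    // or the version 2 shape
    //   {"version":2,"firstName":"Ada","lastName":"Lovelace"}.
    public static CustomerV2 Read(string json)
    {
        using JsonDocument document = JsonDocument.Parse(json);
        JsonElement root = document.RootElement;

        // Documents without a version marker are treated as version 1.
        int version = root.TryGetProperty("version", out var v) ? v.GetInt32() : 1;

        if (version >= 2)
        {
            return new CustomerV2(
                root.GetProperty("firstName").GetString() ?? "",
                root.GetProperty("lastName").GetString() ?? "");
        }

        // Upgrade the old shape in application code by splitting at the first space.
        string name = root.GetProperty("name").GetString() ?? "";
        int space = name.IndexOf(' ');
        return space < 0
            ? new CustomerV2(name, "")
            : new CustomerV2(name.Substring(0, space), name.Substring(space + 1));
    }
}

public static class Program
{
    public static void Main()
    {
        var oldDoc = CustomerReader.Read("{\"version\":1,\"name\":\"Ada Lovelace\"}");
        var newDoc = CustomerReader.Read("{\"version\":2,\"firstName\":\"Ada\",\"lastName\":\"Lovelace\"}");

        Console.WriteLine(oldDoc == newDoc); // True: both shapes produce the same value
    }
}
```

Every additional stored version adds another branch like this one, which is the extra complexity the text refers to.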

NoSQL databases typically do not enforce ACID, which means they have both performance and scalability benefits over relational databases. They're well suited to extremely large datasets and objects that are not well suited to storage in normalized table structures. There is no reason why a single application cannot take advantage of both relational and NoSQL databases, using each where it is best suited.

Azure Cosmos DB

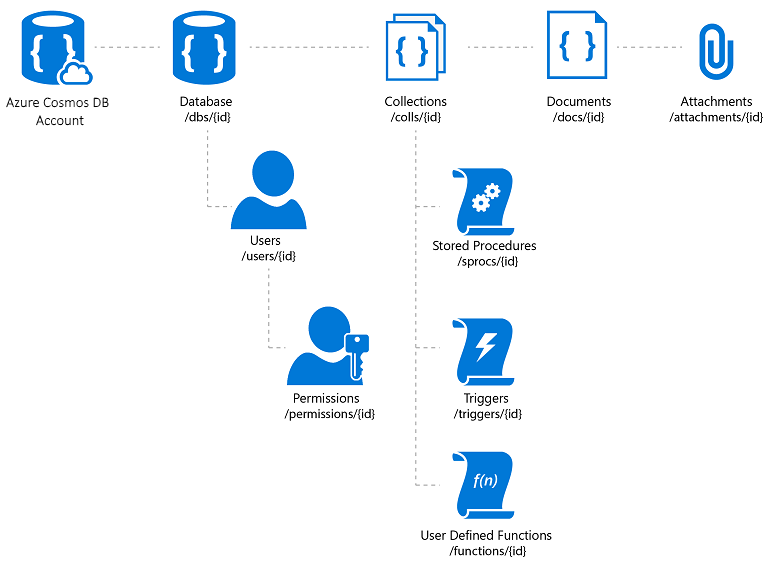

Azure Cosmos DB is a fully managed NoSQL database service that offers cloud-based schema-free data storage. Azure Cosmos DB is built for fast and predictable performance, high availability, elastic scaling, and global distribution. Despite being a NoSQL database, developers can use rich and familiar SQL query capabilities on JSON data. All resources in Azure Cosmos DB are stored as JSON documents. Resources are managed as items, which are documents containing metadata, and feeds, which are collections of items. Figure 8-2 shows the relationship between different Azure Cosmos DB resources.

Figure 8-2. Azure Cosmos DB resource organization.

The Azure Cosmos DB query language is a simple yet powerful interface for querying JSON documents. The language supports a subset of ANSI SQL grammar and adds deep integration of JavaScript object, arrays, object construction, and function invocation.

References – Azure Cosmos DB

- Azure Cosmos DB Introduction https://docs.microsoft.com/azure/cosmos-db/introduction

Other persistence options

In addition to relational and NoSQL storage options, ASP.NET Core applications can use Azure Storage to store various data formats and files in a cloud-based, scalable fashion. Azure Storage is massively scalable, so you can start out storing small amounts of data and scale up to storing hundreds of terabytes if your application requires it. Azure Storage supports four kinds of data:

- Blob Storage for unstructured text or binary storage, also referred to as object storage.

- Table Storage for structured datasets, accessible via row keys.

- Queue Storage for reliable queue-based messaging.

- File Storage for shared file access between Azure virtual machines and on-premises applications.

References – Azure Storage

- Azure Storage Introduction https://docs.microsoft.com/azure/storage/common/storage-introduction

Caching

In web applications, each web request should be completed in the shortest time possible. One way to achieve this functionality is to limit the number of external calls the server must make to complete the request. Caching involves storing a copy of data on the server (or another data store that is more easily queried than the source of the data). Web applications, and especially non-SPA traditional web applications, need to build the entire user interface with every request. This approach often involves making many of the same database queries repeatedly from one user request to the next. In most cases, this data changes rarely, so there is little reason to constantly request it from the database. ASP.NET Core supports response caching, for caching entire pages, and data caching, which supports more granular caching behavior.

When implementing caching, it's important to keep in mind separation of concerns. Avoid implementing caching logic in your data access logic, or in your user interface. Instead, encapsulate caching in its own classes, and use configuration to manage its behavior. This approach follows the Open/Closed and Single Responsibility principles, and will make it easier for you to manage how you use caching in your application as it grows.

ASP.NET Core response caching

ASP.NET Core supports two levels of response caching. The first level does not cache anything on the server, but adds HTTP headers that instruct clients and proxy servers to cache responses. This functionality is implemented by adding the ResponseCache attribute to individual controllers or actions:

    [ResponseCache(Duration = 60)]
    public IActionResult Contact()
    {
        ViewData["Message"] = "Your contact page.";
        return View();
    }

The previous example will result in the following header being added to the response, instructing clients to cache the result for up to 60 seconds.

    Cache-Control: public,max-age=60

In order to add server-side in-memory caching to the application, you must reference the Microsoft.AspNetCore.ResponseCaching NuGet package, and then add the Response Caching middleware. This middleware is configured with services and middleware during app startup:

    builder.Services.AddResponseCaching();

    // other code omitted, including building the app

    app.UseResponseCaching();

The Response Caching Middleware will automatically cache responses based on a set of conditions, which you can customize. By default, only 200 (OK) responses requested via GET or HEAD methods are cached. In addition, requests must have a response with a Cache-Control: public header, and cannot include headers for Authorization or Set-Cookie. See a complete list of the caching conditions used by the response caching middleware.

Data caching

Rather than (or in addition to) caching full web responses, you can cache the results of individual data queries. For this functionality, you can use in-memory caching on the web server, or use a distributed cache. This section will demonstrate how to implement in-memory caching.

You add support for memory (or distributed) caching in ConfigureServices:

    builder.Services.AddMemoryCache();
    builder.Services.AddMvc();

Be sure to add the Microsoft.Extensions.Caching.Memory NuGet package as well.

Once you've added the service, you request IMemoryCache via dependency injection wherever you need to access the cache. In this example, the CachedCatalogService is using the Proxy (or Decorator) design pattern, by providing an alternative implementation of ICatalogService that controls access to (or adds behavior to) the underlying CatalogService implementation.

    public class CachedCatalogService : ICatalogService
    {
        private readonly IMemoryCache _cache;
        private readonly CatalogService _catalogService;
        private static readonly string _brandsKey = "brands";
        private static readonly string _typesKey = "types";
        private static readonly TimeSpan _defaultCacheDuration = TimeSpan.FromSeconds(30);

        public CachedCatalogService(
            IMemoryCache cache,
            CatalogService catalogService)
        {
            _cache = cache;
            _catalogService = catalogService;
        }

        public async Task<IEnumerable<SelectListItem>> GetBrands()
        {
            return await _cache.GetOrCreateAsync(_brandsKey, async entry =>
            {
                entry.SlidingExpiration = _defaultCacheDuration;
                return await _catalogService.GetBrands();
            });
        }

        public async Task<Catalog> GetCatalogItems(int pageIndex, int itemsPage, int? brandID, int? typeId)
        {
            string cacheKey = $"items-{pageIndex}-{itemsPage}-{brandID}-{typeId}";
            return await _cache.GetOrCreateAsync(cacheKey, async entry =>
            {
                entry.SlidingExpiration = _defaultCacheDuration;
                return await _catalogService.GetCatalogItems(pageIndex, itemsPage, brandID, typeId);
            });
        }

        public async Task<IEnumerable<SelectListItem>> GetTypes()
        {
            return await _cache.GetOrCreateAsync(_typesKey, async entry =>
            {
                entry.SlidingExpiration = _defaultCacheDuration;
                return await _catalogService.GetTypes();
            });
        }
    }

To configure the application to use the cached version of the service, but still allow the service to get the instance of CatalogService it needs in its constructor, you would add the following lines in ConfigureServices:

    builder.Services.AddMemoryCache();
    builder.Services.AddScoped<ICatalogService, CachedCatalogService>();
    builder.Services.AddScoped<CatalogService>();

With this code in place, the database calls to fetch the catalog data will be made only when a cache entry has expired, rather than on every request. Depending on the traffic to the site, this can have a significant impact on the number of queries made to the database, and the average page load time for the home page that currently depends on all three of the queries exposed by this service.

An issue that arises when caching is implemented is stale data: data that has changed at the source while an out-of-date version remains in the cache. A simple way to mitigate this issue is to use small cache durations, since for a busy application there is limited additional benefit to extending the length of time data is cached. For example, consider a page that makes a single database query and is requested ten times per second. If this page is cached for one minute, the number of database queries made per minute drops from 600 to one, a reduction of 99.8%. If the cache duration were instead one hour, the overall reduction would be 99.997%, but the likelihood and potential age of stale data would both increase dramatically.
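The arithmetic behind those two percentages can be written out as follows. This is a worked check that assumes exactly one database query per cache window (as with an absolute expiration) and ignores concurrent cache misses:

```latex
\text{reduction} = 1 - \frac{\text{queries with caching}}{\text{queries without caching}}

% One-minute cache: 10 requests/s for 60 s, served by a single query
\text{reduction}_{1\,\text{min}} = 1 - \frac{1}{10 \times 60} = 1 - \frac{1}{600} \approx 0.9983 \quad (99.8\%)

% One-hour cache: 10 requests/s for 3600 s, served by a single query
\text{reduction}_{1\,\text{h}} = 1 - \frac{1}{10 \times 3600} = 1 - \frac{1}{36000} \approx 0.99997 \quad (99.997\%)
```

Extending the duration by a factor of 60 improves the reduction by less than 0.2 percentage points, which is why short durations capture most of the benefit.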

Another approach is to proactively remove cache entries when the data they contain is updated. Any individual entry can be removed if its key is known:

_cache.Remove(cacheKey);

If your application exposes functionality for updating entries that it caches, you can remove the corresponding cache entries in the code that performs the updates. Sometimes there may be many different entries that depend on a particular set of data. In that case, it can be useful to create dependencies between cache entries by using a CancellationChangeToken. With a CancellationChangeToken, you can expire multiple cache entries at once by canceling the token.

// configure CancellationToken and add entry to cache
var cts = new CancellationTokenSource();
_cache.Set("cts", cts);
_cache.Set(cacheKey, itemToCache, new CancellationChangeToken(cts.Token));

// elsewhere, expire the cache by cancelling the token
_cache.Get<CancellationTokenSource>("cts").Cancel();

Caching can dramatically improve the performance of web pages that repeatedly request the same values from the database. Be sure to measure data access and page performance before applying caching, and only apply caching where you see a need for improvement. Caching consumes web server memory resources and increases the complexity of the application, so it's important you don't prematurely optimize using this technique.
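Returning to proactive removal of a single entry: the following is a minimal sketch of an update path that evicts the cached brand list after a write. The CatalogBrandUpdater class, its RenameBrandAsync method, and the save delegate are hypothetical names used only for illustration and are not part of eShopOnWeb. The "brands" key matches the one used by CachedCatalogService above.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Memory;

// Hypothetical update-side service (an assumption, not eShopOnWeb code).
public class CatalogBrandUpdater
{
    // Must match the key CachedCatalogService uses for the brand list.
    private const string BrandsKey = "brands";

    private readonly IMemoryCache _cache;

    // Stand-in for whatever persists the change (for example, a repository call).
    private readonly Func<int, string, Task> _saveBrand;

    public CatalogBrandUpdater(IMemoryCache cache, Func<int, string, Task> saveBrand)
    {
        _cache = cache;
        _saveBrand = saveBrand;
    }

    public async Task RenameBrandAsync(int brandId, string newName)
    {
        // Persist the change first, so a failed write leaves the cache untouched.
        await _saveBrand(brandId, newName);

        // Evict the cached list; the next GetBrands call reloads it from the database.
        _cache.Remove(BrandsKey);
    }
}
```

Evicting after the write, rather than before it, avoids a window in which a concurrent request could re-cache the old data ahead of the update.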

Getting data to Blazor WebAssembly apps

If you're building apps that use Blazor Server, you can use Entity Framework and other direct data access technologies as they've been discussed thus far in this chapter. However, when building Blazor WebAssembly apps, like other SPA frameworks, you will need a different strategy for data access. Typically, these applications access data and interact with the server through web API endpoints.

If the data or operations being performed are sensitive, be sure to review the section on security in the previous chapter and protect your APIs against unauthorized access.

You'll find an example of a Blazor WebAssembly app in the eShopOnWeb reference application, in the BlazorAdmin project. This project is hosted within the eShopOnWeb Web project, and allows users in the Administrators group to manage the items in the store. You can see a screenshot of the application in Figure 8-3.

Figure 8-3. eShopOnWeb Catalog Admin Screenshot.

When fetching data from web APIs within a Blazor WebAssembly app, you just use an instance of HttpClient as you would in any .NET application. The basic steps involved are to create the request to send (if necessary, usually for POST or PUT requests), await the request itself, verify the status code, and deserialize the response. If you're going to make many requests to a given set of APIs, it's a good idea to encapsulate your APIs and configure the HttpClient base address centrally. This way, if you need to adjust any of these settings between environments, you can make the changes in just one place. You should add support for this service in your Program.Main:

builder.Services.AddScoped(sp => new HttpClient
{
    BaseAddress = new Uri(builder.HostEnvironment.BaseAddress)
});

If you need to access services securely, you should obtain a secure token and configure the HttpClient to pass this token as an Authorization header with every request:

_httpClient.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Bearer", token);

This can be done from any component that has the HttpClient injected into it, provided that HttpClient wasn't added to the application's services with a Transient lifetime. Every reference to HttpClient in the application references the same instance, so changes to it in one component flow through the entire application. A good place to perform this authentication check (followed by specifying the token) is in a shared component like the main navigation for the site. Learn more about this approach in the BlazorAdmin project in the eShopOnWeb reference application.

One benefit of Blazor WebAssembly over traditional JavaScript SPAs is that you don't need to keep two copies of your data transfer objects (DTOs) synchronized. Your Blazor WebAssembly project and your web API project can both share the same DTOs in a common shared project. This approach eliminates some of the friction involved in developing SPAs.

To quickly get data from an API endpoint, you can use the built-in helper method, GetFromJsonAsync. There are similar methods for POST, PUT, etc. The following shows how to get a CatalogItem from an API endpoint using a configured HttpClient in a Blazor WebAssembly app:

var item = await _httpClient.GetFromJsonAsync<CatalogItem>($"catalog-items/{id}");

Once you have the data you need, you'll typically track changes locally. When you want to make updates to the backend data store, you'll call additional web APIs for this purpose.
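For an update call, the basic steps described earlier (create the request, await it, verify the status code, deserialize the response) might look like the sketch below. CatalogItemDto and CatalogItemClient are hypothetical names, and the PUT route "catalog-items" is an assumption modeled on the GET route shown above. The HttpClient is assumed to have its BaseAddress configured as described earlier.

```csharp
using System.Net.Http;
using System.Net.Http.Json;
using System.Threading.Tasks;

// Hypothetical DTO; in practice it could live in a project shared with the web API.
public class CatalogItemDto
{
    public int Id { get; set; }
    public string Name { get; set; } = "";
    public decimal Price { get; set; }
}

// Hypothetical wrapper that keeps endpoint paths in one place.
public class CatalogItemClient
{
    private readonly HttpClient _httpClient;

    public CatalogItemClient(HttpClient httpClient) => _httpClient = httpClient;

    public async Task<CatalogItemDto?> UpdateAsync(CatalogItemDto item)
    {
        // Create and send the request; the item is serialized to JSON as the body.
        // The relative route is an assumption and is resolved against BaseAddress.
        using HttpResponseMessage response =
            await _httpClient.PutAsJsonAsync("catalog-items", item);

        // Verify the status code; throws HttpRequestException on a non-success code.
        response.EnsureSuccessStatusCode();

        // Deserialize the response body back into the shared DTO type.
        return await response.Content.ReadFromJsonAsync<CatalogItemDto>();
    }
}
```

A component would receive CatalogItemClient through dependency injection and call UpdateAsync after the user saves an edit. Whether the API echoes the updated item in its response body is also an assumption of this sketch.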

References – Blazor Data

- Call a web API from ASP.NET Core Blazor https://docs.microsoft.com/aspnet/core/blazor/call-web-api


Source: https://docs.microsoft.com/en-us/dotnet/architecture/modern-web-apps-azure/work-with-data-in-asp-net-core-apps
